Few years ago we tried to use cheap SATA disks in HP disk enclosures as “cheap storage” (where information isn’t so important). Idea failed – none of disks tested supported TLER (~500x Samsung 1TB, ~200x Western Digital 1TB); and SmartArray controllers can’t handle such issues (disks tried to fix their issues for long time, controller decided that disk is dead, rebuild started, next disk dies, etc).

OK, TLER issue can’t be fixed (at least, without disk firmware disassembly), but what is causing disks to generate such amount of internal errors (1-2 disks in one 25-disk enclosure/week)?

Those disks are out-of service, so i disassembled few of them to check. So….

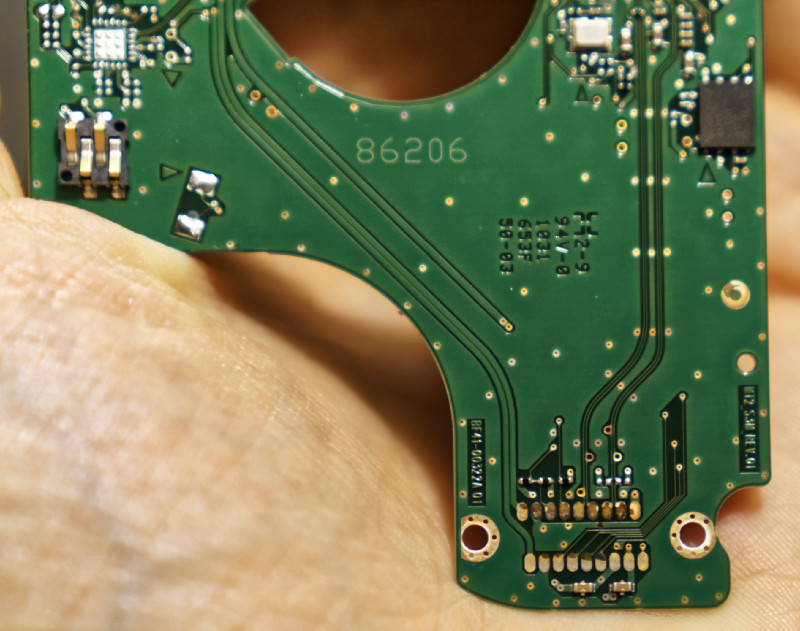

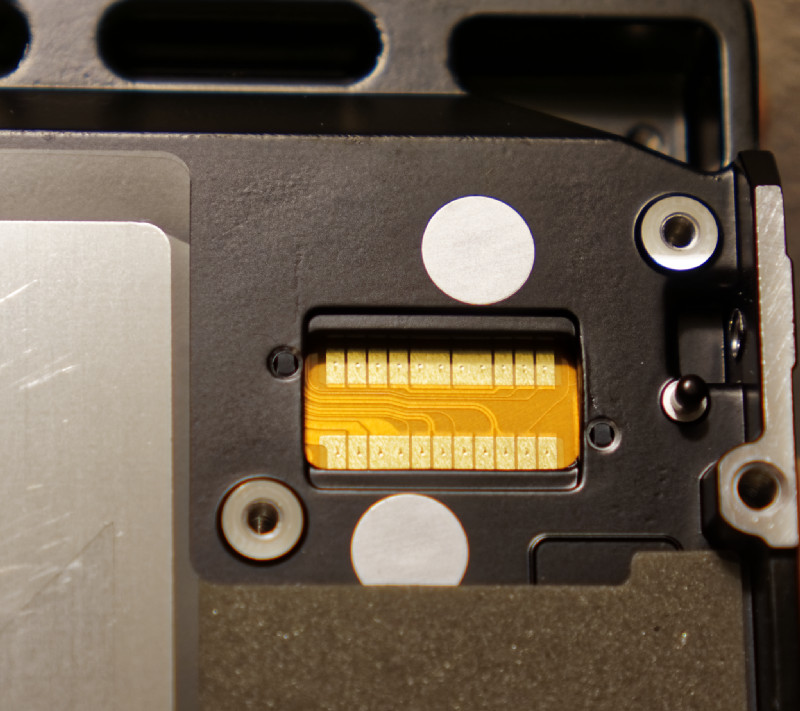

Samsung, 3-y old disk, used in datacenter environment (everything – temperature, humidity, dust – is tightly controlled). See contact pads on bottom from reading heads?

Motor pads are top-left – bigger current is here. But they are clean…. Data (head position feedback and control, data after pre-amp) pads are on bottom, we have very low current here, but they are corroded… Contact resistance is in range 0…50Ω (!). What?

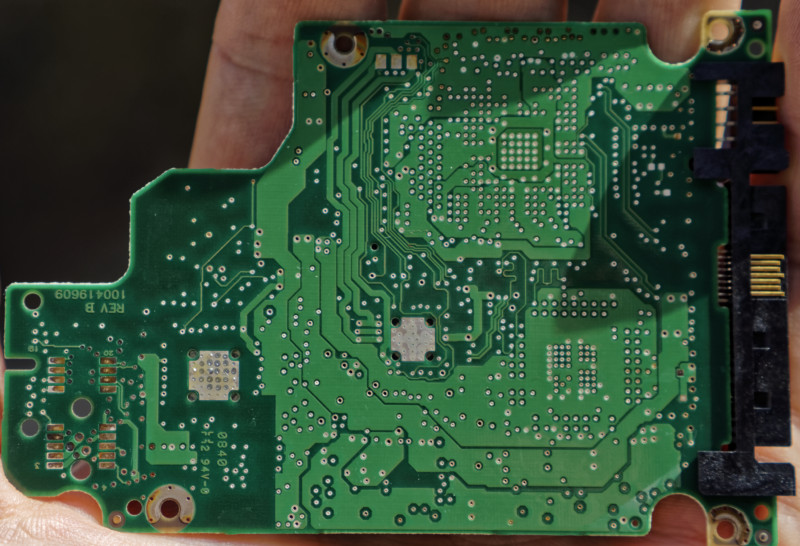

Tested SAS disk, which died some time ago (well, at least controller thinks so). This time – HP 72GB 2.5″. 7 year old….

Pads on left. Contact resistance 0..30Ω – a lot, but still better than Samsung (esp. considering disk age).

Working (but useless) disk – 8y old 146GB SAS. 8 years, check data pads:

Like new. And disk is still working….

Cleaned pads on one “failed” 1TB Samsung – working so far! =)

So, TL;DR – i think that at least some failures are caused by corroded contacts (probably bad design issue – different metals, incorrect pad thickness and so on). They aren’t so usefull now (too old), but if they corrode so much in 2-3y (in datacenter environment), they should die a lot in laptops… Check PCB pads (require T5 screwdriver), clean them and… Maybe HDD will live again?